Identifying blocking is one of the most basic things that a DBA should be monitoring for. I previously wrote about how to identify blocking in SQL Server and find the leading blocker. Recently, my friend Jim Donahoe (blog | twitter) asked me about setting up email alerts related to blocking, which prompted me to clean up some additional code I have that does just this. If you haven’t already read my previous post, you should probably do that now.

Blocking is OK. Excessive blocking is bad.

Think of blocking within the database like traffic lights or a stop sign controlling the flow of traffic on the roads. It’s normal to have to stop at an intersection, let some other folks go, then it’s your turn, and you’re off on your way. However, when the roads are busy, you might have to sit through several light cycles before you get through the intersection and are moving again. Or what happens when a bus doesn’t make it all the way through the intersection and blocks the cross traffic. Well, it’s that excessive blocking that DBAs need to identify and fix in the databases under our watch.

If you have monitoring software, that software probably has monitoring & alerting built in for blocking–but not everyone has every server monitored. For one, monitoring software isn’t free–and for lower-priority servers it might not be in the budget. As part of my home-grown DBA database, I have some stored procedures that alert so that I can handle alerting on the basics, even when full-blown monitoring isn’t in the cards.

What do you need in an alert?

I have a few basic requirements when I’m making an alert. They seem pretty straightforward, but I’m constantly bumping into alerts that don’t meet these requirements. In this context, an alert equals an email.

- Only alert if there’s actually a problem that is actionable. Please don’t throw email alerts at me if there’s nothing for me to do. Don’t be the boy who cried wolf. You probably already have an email rule that ignores alerts you don’t care about. Don’t make more spam.

- Only alert if the problem is critical. In addition to my #1 requirement that it be actionable, I should have to act now. Email alerts are a cry for help, and aren’t appropriate for every problem. For lower-priority problems, I prefer digest reports, where I can set aside time in my day to work on many lower-priority issues and take care of them all at once.

- Give me all the info I need, but just the info I need. It’s 9 o’clock on a Saturday, and your database alerts start to roll in while you’re making love to your tonic and gin. It’s imperative that you’re able to triage problems directly from the alert, and ideally take action without having to do a bunch of investigation to figure out what to do.

- Alert promptly. We’ve already established that we’re dealing with critical problems–if it’s critical, then I need to know now. In particular, I need to know before I find out from end users that they have a problem. Ideally, I can fix it before anyone else notices.

- Don’t over-alert. How many email alerts do you need on the same problem? You probably don’t need to send an email every 30 seconds–but it can also be helpful to alert periodically to let you know a problem is ongoing.

It’s not a lot to ask for, but it does require we do a little bit of extra work.

Let’s build an alert

Identify actionable problems

We’ve already done the heavy lifting in dbo.Check_Blocking to identify excessive blocking. When we have excessive blocking, the action is probably to kill a session, or stop an application process, or tell a coworker to cease & desist.

Identify critical problems

You’ll still need to determine what the right thresholds are for the @BlockingDurationThreshold and @BlockedSessionThreshold–every environment and application might have different thresholds. How much blocking is normal in your environment? How much blocking is acceptable? Do nights & weekends have different critical thresholds than the business day? Are there maintenance windows during which you ignore problems?

I can’t answer any of those questions for you. You’ve got the two parameter knobs to turn, and we’re going to schedule a job to run the procedure, so you’ll need to determine your own job schedule. I frequently use two different schedules — one that runs during business hours, then another with more lax thresholds that runs nights & weekends.

Getting the right data points

We’ve already done the work with the dbo.Check_Blocking procedure to get the right data points, so we’re done, right? Not so fast. We’ve got more work to do.

A couple years ago, I wrote about the importance of sending pretty emails. We’re going to use my dbo.EmailCss() function from that post to help format the email. The CSS generated by that function will define styles for fonts & formatting for HTML tables. This helps make sure that we’re not only presenting the right info, but we’re also doing it in a way that makes it easy to digest. Every email alert that I send from SQL Server starts with this CSS before I even build the HTML email body.

In my dbo.Check_Blocking procedure, I used an XML field to SessionInfo for all of the sessions involved in the blocking chain. I’m going to make some modifications to that code, and this time, I’m going to generate HTML instead, including some formatting to make sure it’s all pretty:

This file contains hidden or bidirectional Unicode text that may be interpreted or compiled differently than what appears below. To review, open the file in an editor that reveals hidden Unicode characters.

Learn more about bidirectional Unicode characters

| — Populate SessionInfo column with HTML details for sending email | |

| — Since there's a bunch of logic here, code is more readable doing this separate than mashing it in with the rest of HTML email creation | |

| UPDATE lb | |

| SET SessionInfo = '<span style="font-weight:bold">Login = </span>' + LoginName + '<br>' + | |

| CASE WHEN TransactionCnt <> 0 | |

| THEN '<span style="font-weight:bold">Transaction Count = </span>' + CAST(TransactionCnt AS nvarchar(10)) + '<br>' | |

| ELSE '' | |

| END + | |

| CASE WHEN WaitResource <> '' | |

| THEN '<span style="font-weight:bold">Wait Resource = </span>' + COALESCE(WaitDescription,WaitResource) + '<br>' | |

| ELSE '' | |

| END + | |

| '<span style="font-weight:bold">Host Name = </span>' + HostName + '<br>' + | |

| CASE WHEN DbName <> '' | |

| THEN '<span style="font-weight:bold">DbName = </span>' + DbName + '<br>' | |

| ELSE '' | |

| END + | |

| '<span style="font-weight:bold">Last Request = </span>' + CONVERT(varchar(20),LastRequestStart,20) + '<br>' + | |

| '<span style="font-weight:bold">Program Name = </span>' + ProgramName + '<br>' | |

| FROM #LeadingBlocker lb; | |

| UPDATE b | |

| SET SessionInfo = '<span style="font-weight:bold">Login = </span>' + LoginName + '<br>' + | |

| '<span style="font-weight:bold">Host Name = </span>' + HostName + '<br>' + | |

| CASE WHEN TransactionCnt <> 0 | |

| THEN '<span style="font-weight:bold">Transaction Count = </span>' + CAST(TransactionCnt AS nvarchar(10)) + '<br>' | |

| ELSE '' | |

| END + | |

| CASE WHEN WaitResource <> '' | |

| THEN '<span style="font-weight:bold">Wait Resource = </span>' + COALESCE(WaitDescription,WaitResource) + '<br>' | |

| ELSE '' | |

| END + | |

| '<span style="font-weight:bold">DbName = </span>' + DbName + '<br>' + | |

| '<span style="font-weight:bold">Last Request = </span>' + CONVERT(varchar(20),LastRequestStart,20) + '<br>' + | |

| '<span style="font-weight:bold">Program Name = </span>' + ProgramName + '<br>' | |

| FROM #Blocked b; |

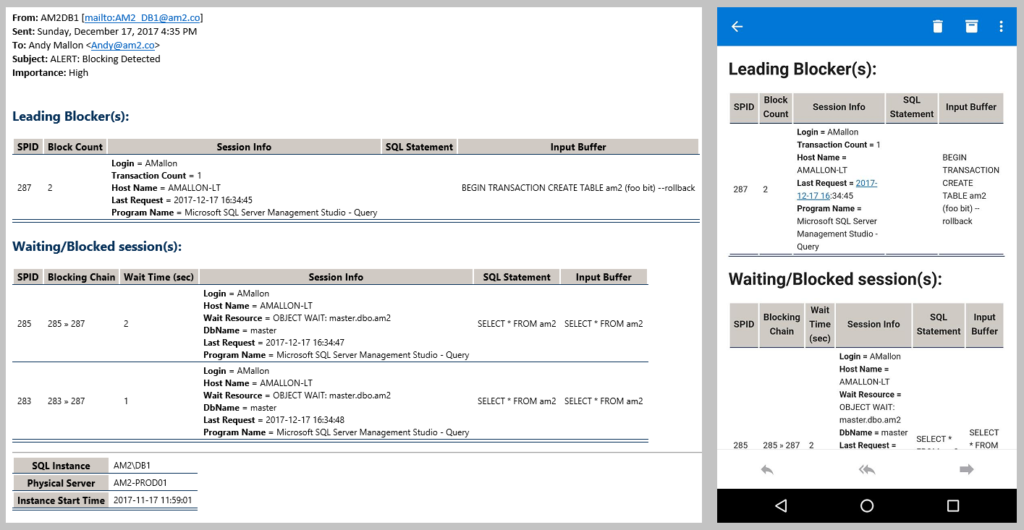

There’s one more critical piece of info that we need to add to our alerts. What SQL Instance is sending these alerts? If it’s a Failover Cluster Instance, which node is the instance running on? Has it failed over or restarted recently? What’s the instance start time? Similar to the way I use a function to generate standard CSS, I use a function to generate a standard footer, dbo.EmailServerInfo_Get().

Alert promptly

This is pretty easy. We’ll just schedule a job to run frequently. Depending on what you determined was your critical threshold, you might set this alert to run as frequently as every minute.

For most people, “scheduling a job” means setting up a SQL Agent job to run the procedure. If you’re using Azure SQL DB (which has neither DB Mail nor SQL Agent), or if you just love PowerShell, you might want to use PowerShell to centrally run this alert and send emails. (This actually isn’t a bad idea. Depending on the nature of your problem, DB Mail might not be able to send an email.) In this case, simply run the procedure with @Debug=2. With that parameter value, the procedure just returns a single-row, single-column result set that is an HTML blob. Just use that HTML blob as the body of your email and send it along from PowerShell.

Don’t over-alert

I just told you to schedule the job to run every minute. That means when there is blocking, you’re going to get an email every minute, right? WRONG. Each time dbo.Alert_Blocking sends an email, it logs that to a table(dbo.Monitor_Blocking). Each time it identifies blocking, it checks that table to see if it recently sent an email alert. If it recently sent an email, then it snoozes itself & won’t re-alert. The @EmailThreshold parameter controls how long to snooze alerts. Simply pass in how many minutes to snooze between emails. As a bonus, this table can be used as a time machine to look back at blocking problems over time.

Gah, you just threw a lot at me, Andy

OK, OK, OK… I threw a LOT of info at you in a relatively short post (plus those two other posts I referenced). So what do you actually need to do to set up this alert?

If you install my full DBA database, then you have everything you need. Instructions to install the whole thing are in the project’s README. If you want to install just the bits for this alert, there are a few objects you’ll need out of that project:

- dbo.EmailCSS_Get() – This scalar function provides the CSS to ensure the email alerts are well-formatted and easy to read.

- dbo.EmailServerInfo_Get() – This scalar function provides the HTML for the footer of the email with standard server info.

- dbo.Monitor_Blocking – This is the table used to throttle emails & for historical purposes.

- dbo.Alert_Blocking – This is the procedure that actually does the work, and the procedure you’ll schedule to send your alerts.

Now that it’s installed, let’s see the alert

Here’s a sample email, shown in the Outlook 2016 Desktop app, and Outlook for Android:

If you try using this let me know how you like it. If you find problems, let me know in the comments below, or by logging an issue on GitHub.

And remember: If a developer is causing blocking, kill the session, don’t kill the developer.

Nice report. Thank you.

Thanks for the nice article but when it insert into table i dont see any value for Inputbuffer or sql statement, both are coming null/empty.

The inputbuffer doesn’t actually get stored on the table–when I added inputbuffer to the output, I didn’t add it to the table.

That said, the sqltext & sqlstatement will be the same in the table as they are in the output. Have you tried running it with debug=1, or running the Check_Blocking procedure?

thank you, will apply this for my environment.

Usefull and simple…you’re great again.

Andy, I found this very interesting as we have put together something very similar indeed. One minor point and one question.

The minor point; in your “–Decypher wait resources.” section, you’re handling KEY, OBJECT, PAGE and RID but not TAB (which seems to come as DBID:ObjectID:IndexID) or no type (DBID:FileID:PageID as far as I can see). Any reason?

Question. If I understand it correctly, you’re displaying the details of the blocking statement. We were doing this and getting some details which were very hard to explain so I asked a question over on SQL Server Central, and got a very helpful reply from Gail Shaw; https://www.sqlservercentral.com/Forums/1878042/Finding-the-cause-of-blocking-between-processes. In summary:

Q: So what you’re saying is that all we can infer from the results of the query is that one process is being blocked by some statement which has already run (or may still be running) in the transaction of another process. That statement may have already completed – it’s not necessarily the one currently running.

A: Yes.

Would be very interested to hear your thoughts.

I don’t decipher the TAB locks because I don’t usually see them in my environment, so I’ve never bothered to do that. I’ll look into adding that at some point in the future, or if you want to give it a crack & do a Pull Request in GitHub, that’s cool, too!

That’s a great point about the SQL Statements that I’m showing in the output. I’m showing the *current* SQL Statement/input buffer for the session that owns the lock. There is no guarantee that the statement you see is the statement that created the lock. You might have some additional work to find the executing statement, then track backwards in code to find the code that took the lock which is causing blocking.

In this scenario:

* BEGIN TRAN

* UPDATE a row

* Run a really long-running SELECT statement

You would likely see the really long-running SELECT, even if the blocking is due to the UPDATE. However, in this case the wait resource would give an indication that the UPDATE was the culprit. This is a case where the tool makes it easier for you to find the problem, but can’t do all the work for you.

Before I try to implement your blocking report. We have a scenario where we have a couple MERGE statements that cause blocking on a data warehouse. That is not the issue. The issue is another job that runs behind that blocking that causes cascaded blocking. So our monitoring tool Spotlight does not alert on secondary blocking, just the primary. Does your report or process catch secondary blocking chains?

Yup, my alert will discover the full blocking chain. If you look at the other post that shows the details on how I identify the leading blocker (https://am2.co/2017/10/finding-leader-blocker/), there’s an image in that post that shows how I treat deep blocking chains (this image: https://wp.me/a7ZEjd-jj).

In your scenario, the MERGE would show up as the “Leading Blocker(s)” section, then the other job & cascading blocking would all show up in the “Waiting/Blocked Session(s)” section. The way I display the blocked sessions, it should be pretty easy to identify that second-tier blocker and separate it from the other noise.

Give it a try, and if you’ve got feedback on how to make it better, I’d love to hear.

I have tried your procs to replace my more verbose version to see how yours works, but I have seemed to stumbled upon a race condition. It would throw an error ever so occasionally. When I finally saw the message (some happened in the middle of the night, and my Agent logs are only good for about a half hour), I think I tracked it down. The message was “Invalid SPID 137 specified. [SQLSTATE 42000] (Error 7955). The step failed.”

The execution of dbcc inputbuffer can throw an error if the SPID has terminated by the time it runs. So, I put it in a Begin Try/End Try/Begin Catch/End Catch block. In the Catch portion, I simply chose to insert a dummy record in it’s place.

Insert Into #InputBuffer(EventType,Params,EventInfo) Values(N’Invalid SPID’, 0, Error_Message())

Thanks for the tip. I’ll look at adding the Try/Catch around that `DBCC INPUTBUFFER` call so that it can be rolled into the main proc.

Andy, what do you think of adding an input parameter to EmailServerInfo that accepts the calling object name, and then appending that in the output after the start time.

ALTER FUNCTION [dbo].[EmailServerInfo_Get](@CallingObjectName VARCHAR(255))

…..

IF ISNULL(@CallingObjectName, ”) ”

SELECT @ServerInfo = @ServerInfo + ‘

Initiating Object Name

‘ + @CallingObjectName + N’

‘

SELECT @ServerInfo = @ServerInfo + ”;

calling proc:

SELECT @ObjectName = OBJECT_NAME(@@PROCID)

SELECT @EmailBody = @EmailBody + ” + dbo.EmailServerInfo_Get(@ObjectName);

I find the calling object name helpful when troubleshooting. Ideally I’d pass in a link to a runbook too, but…. 🙂

Hello Andy, Thanks for the Great scripts. Loved it. However i do not see DB name in the table as well as blocking report for lead blocker section?

Hi!, excelent post!, so, i’ve installed four components (functions, Table and Store Procedure) but , how to run this process?? because, i don’t see in the SP the call to the email function, so, i tried to run SP and i’ve recieved this messages and error: DBCC execution completed. If there are error messages, consult your system administrator.

Message 9400, level 16, state 1, procedure Check_Blocking, line 336 [start line of lot 2] XML analysis: line 1, character 428; unexpected end of entry

I will apreciate your feedback!

Regards

Pablo, from Argentina

Hi Pablo–

From the error message you included, it looks like you’re using the `dbo.Check_Blocking` procedure, this post is about the `dbo.Alert_Blocking` procedure. Make sure you’re calling the right one! If you look at `dbo.Alert_Blocking.sql`, you’ll see line 509 is the call to `sp_send_dbmail`.

Regarding your error message, I’d need a little more to go on. That error is probably coming from the spot where I build the XML session info column. I haven’t seen that error before, but if there’s something wonky with that XML, I’ll probably need some clue to reproduce and fix it. If you see anything suspicious, or are able to reliably reprice the issue, please log an issue over on GitHub: https://github.com/amtwo/dba-database/issues

Hi Andy!, i’ve can configurate Alerts!, the emails show a very nice Block report!

Thanks a lot!

Regards.-

Hi Andy, I’ve installed four scripts[dbo.EmailCSS_Get(), dbo.EmailServerInfo_Get(), dbo.Monitor_Blocking & dbo.Alert_Blocking] and manually created blocking of two-three sessions. When I execute a table:[DBA].[dbo].[Monitor_Blocking] I’m receiving the output but when I’m running SP:[dbo].[Alert_Blocking] I’m receiving following email,

”

SQL Server Alert System: ‘Respond to Blocking’ occurred on \\VCSSQLDB01\PRODSQL01

SQL Backup Job Failure Alert_Automated Notification

DATE/TIME: 1/27/2020 1:48:04 PM

DESCRIPTION: SELECT * FROM BLOCKED_PROCESS_REPORT

COMMENT: (None)

JOB RUN: (None)

”

I’ve tried changing @Debug to 1 & 2 but still it is throwing the same email alert.

Regards,

Ganesh

Without an actual error message, it’s hard for me to guess, and I can’t really debug your issue from here. It looks like something simply isn’t configured correctly. You might try setting it up again from scratch to make sure you have everything installed. I strongly suggest grabbing the full repo from Github, and using the install process outlined in the readme.

If you can reliably reproduce the issue, I’d suggest you log an issue on Github.